Meta's Andromeda AI doesn't care about your audience segmentation. It cares about your creative. The algorithm reads the visual, the copy, the tone, the format, and it decides who sees it. Your audience isn't something you build in Ads Manager anymore. Your audience is a function of what your creative says and who it resonates with.

If your creative is generic, your audience will be too. If your creative speaks to a specific person with a specific problem, the algorithm will find that person at scale.

This is the shift most agencies haven't made. And it's why most DTC brands are leaving new customer growth on the table.

The Real Reason Your Meta Campaigns Hit a Ceiling

You've probably experienced this: an ad works. You scale it. Performance drops. You make a new version of the same ad. Rinse, repeat, and plateau.

The diagnosis most agencies give you is audience fatigue. The real diagnosis is creative poverty.

When you only test minor variations (swap the headline, change the color, cut five seconds from the video), you're not actually learning anything. You're running the same hypothesis in a slightly different costume. The algorithm has already told you everything it can about that idea.

What it hasn't told you yet is whether a completely different idea (a different persona, a different emotional entry point, a different problem framing) would unlock a pocket of new customers that your current creative has never reached.

That's the question high-variance creative testing is designed to answer.

What High-Variance Creative Testing Actually Means

Here's the distinction that matters: testing variations vs. testing concepts.

Testing variations looks like this:

- Version A: Blue background

- Version B: Yellow background

- Version C: Same hook, different CTA

Testing concepts looks like this:

- Concept A: Ad targeting the performance-obsessed athlete who reads every ingredient label and is skeptical of mainstream sports drinks

- Concept B: Ad targeting the weekend warrior who's been cramping on long runs and doesn't know why

- Concept C: Ad targeting the clean-ingredient consumer who applies the same standards to their sports nutrition that they apply to their food

These are fundamentally different people. They need fundamentally different messages. They respond to fundamentally different proof structures, emotional triggers, and calls to action.

If you're only testing variations, you're optimizing within one idea. If you're testing concepts, you're discovering which ideas are even worth optimizing.

The Creative Matrix: How We Structure Testing at Scale

Before we write a single word of copy or brief a single asset, we build a creative matrix.

The matrix has three dimensions:

1. Message Angle: What is this ad's core argument?

- Pain point: You're experiencing cramping, bonking, GI distress. Here's why, and here's what fixes it.

- Desire: Race faster. Recover cleaner. Feel better on mile 18 than mile 4.

- Ingredient transparency: Here's exactly what's in our product, and here's exactly what isn't.

- Social proof: 50,000+ athletes trust this. Here's what they say.

- Founder story: A sports dietitian created this for pro athletes because the mainstream options were junk.

- Skeptic conversion: Why serious athletes stopped using the big brands.

2. Funnel Stage: Where is this person in their awareness journey?

- Problem-Unaware: They don't know they have the problem yet. Lead with education and curiosity.

- Problem-Aware: They know the problem, not the solution. Lead with validation and empathy.

- Solution-Aware: They're comparing options. Lead with differentiation and proof.

- Most-Aware: They know your brand. Lead with urgency, social proof, and the offer.

3. Format: How is the idea delivered?

- Static image: Best for rapid testing and sharp persona-specific messaging

- Short-form video (15 to 30 seconds): Hook-driven, emotion-led

- UGC-style video: Community-native, authentic, trust-building

- Creator whitelist content: Paid reach with organic trust

Picking just three options from each dimension gives you a 3×3×3 matrix, which produces 27 genuinely distinct tests. Not 27 variations of one idea. 27 different hypotheses about who your customer is and what will move them to buy.

No two cells should look alike. If they do, you're testing variations again.
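To make the arithmetic concrete, here is a minimal Python sketch of how a matrix like this could be enumerated. The dimension values come from the lists above, but which three to pick from each dimension is an illustrative assumption, and the variable names are hypothetical rather than part of any tool we use.

```python
from itertools import product

# Three options per dimension, chosen from the lists above for illustration only.
angles = ["pain point", "desire", "ingredient transparency"]
stages = ["problem-aware", "solution-aware", "most-aware"]
formats = ["static image", "short-form video", "UGC-style video"]

# Each cell of the matrix is one distinct test hypothesis.
matrix = [
    {"angle": angle, "stage": stage, "format": fmt}
    for angle, stage, fmt in product(angles, stages, formats)
]

print(len(matrix))  # 27

# Sanity check: no two cells share the same combination.
unique_cells = {(c["angle"], c["stage"], c["format"]) for c in matrix}
print(len(unique_cells) == len(matrix))  # True
```

A list like this only guarantees that the combinations differ on paper. Whether two cells actually look alike as finished ads is still a judgment call for whoever writes the briefs.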

The Persona Framework: Why "Target Audience" Is Not Enough

Most brands target based on demographics and interests: age, location, household income, and "fitness enthusiasts." This is the information the algorithm already has. Giving it back to the algorithm in the form of audience constraints doesn't help. It limits.

What the algorithm needs is a signal about who specifically responds to which specific message. That signal comes from creative that speaks precisely to one type of person.

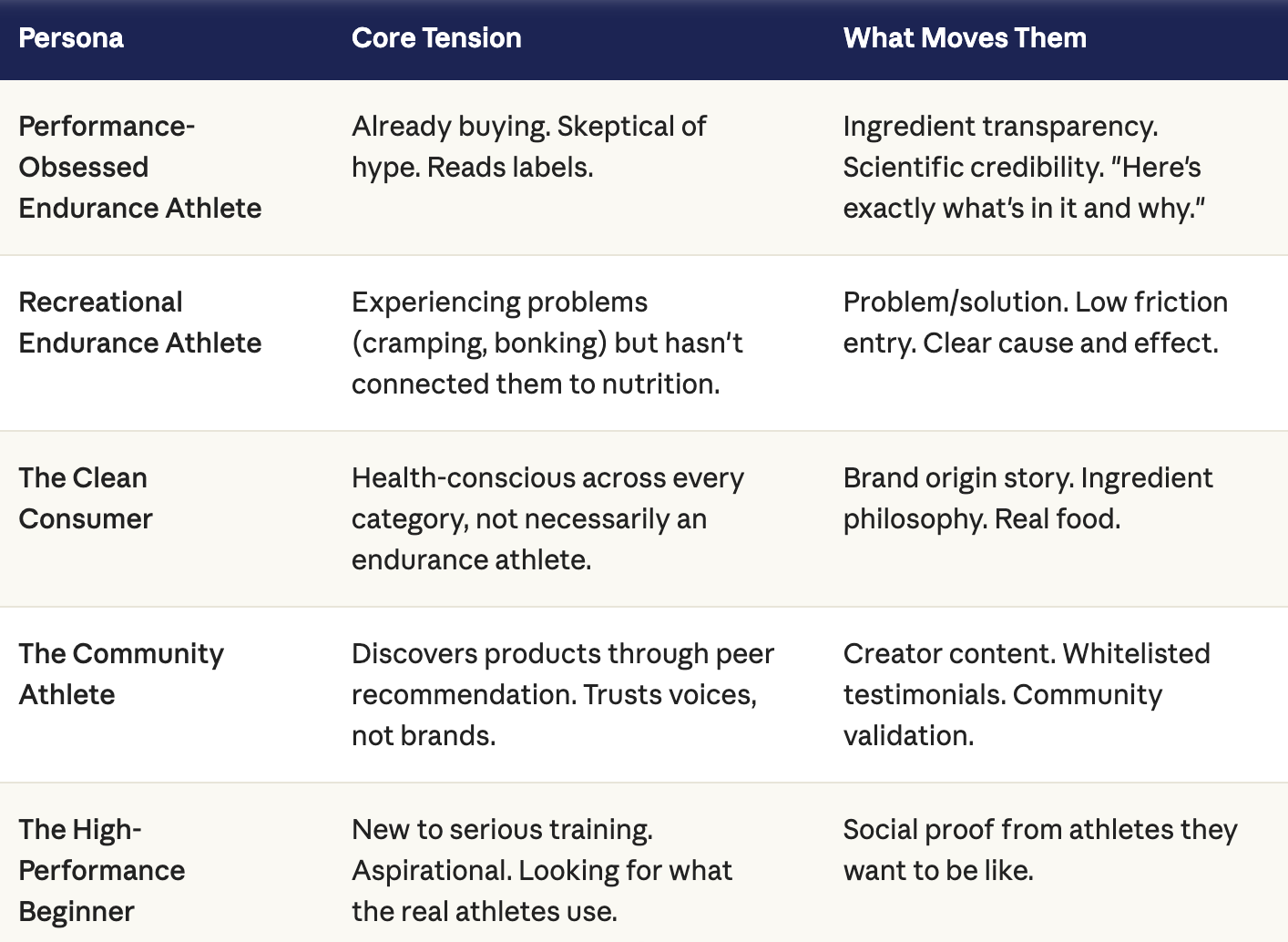

Here's an example of how this plays out for an endurance sports nutrition brand:

Each of these personas gets its own creative brief, its own landing page, and its own offer. They are separate acquisition funnels, not audience segments within a single campaign.

The brands that crack new customer acquisition at scale do it by building persona-specific systems, not by running one campaign to everyone.

What Good Creative Testing Infrastructure Looks Like

Here's the operational reality: you can have the right framework and still execute it poorly.

Creative testing fails when:

- There aren't enough concepts in the test. If you're launching three to five ads at a time, you're not testing. You're guessing slowly. A real testing framework puts meaningful volume into enough concepts to let the algorithm find what works. Aim for 20 to 30 unique assets per month, minimum, in the early stages of a program.

- You pull the plug too early. New creative needs time to gather signal before you can draw conclusions. Cutting a concept after three days of data isn't optimization. It's reacting to noise. Let the algorithm learn.

- You optimize based on platform ROAS. Platform attribution is a directional signal, not ground truth. A creative that appears to have lower ROAS might be generating more verified new-to-file customers as measured through Shopify. The metric you're optimizing for determines what you find.

- The winning creative never gets documented. This is the most common failure mode. An ad works, you scale it, it eventually fatigues, and no one has written down why it worked. Was it the persona? The angle? The format? The hook? Without documentation, every testing cycle starts from scratch instead of compounding on what you've already learned.
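The point about platform ROAS can be shown with a small Python sketch. Every number below is invented purely for illustration, and the `ConceptResult` class and its field names are hypothetical. The assumption is that you can pull spend and platform-attributed revenue from the ad platform and a verified new-customer count from your store data.

```python
from dataclasses import dataclass


@dataclass
class ConceptResult:
    name: str
    spend: float
    platform_revenue: float  # revenue as attributed by the ad platform
    new_customers: int       # verified new-to-file customers from store data

    @property
    def platform_roas(self) -> float:
        return self.platform_revenue / self.spend

    @property
    def cost_per_new_customer(self) -> float:
        # Treat a concept with no new customers as infinitely expensive.
        if self.new_customers == 0:
            return float("inf")
        return self.spend / self.new_customers


# Made-up figures, not real campaign data.
results = [
    ConceptResult("Concept A", spend=1000.0, platform_revenue=3000.0, new_customers=10),
    ConceptResult("Concept B", spend=1000.0, platform_revenue=2200.0, new_customers=25),
]

best_by_roas = max(results, key=lambda r: r.platform_roas)
best_by_new = min(results, key=lambda r: r.cost_per_new_customer)

print(best_by_roas.name)  # Concept A
print(best_by_new.name)   # Concept B
```

In this made-up case the two metrics pick different winners, which is the situation the bullet on platform ROAS describes: the metric you rank by decides which concept you scale.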

By day 90 of a well-run testing program, you should have a documented creative playbook: specific angles, specific personas, and specific formats that generate incremental new customers. That playbook is the asset. Not the ads themselves. The knowledge underneath them.
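One lightweight way to keep that playbook is a structured log with one entry per concluded test. The sketch below is only an assumption about what such a log might contain: the field names, the `wins_by` helper, and the entries themselves are hypothetical, with personas and angles borrowed from the examples earlier in this piece.

```python
from collections import Counter

# Hypothetical log entries, written down when each test concludes.
log = [
    {"persona": "weekend warrior", "angle": "pain point",
     "format": "static image", "won": True},
    {"persona": "weekend warrior", "angle": "desire",
     "format": "UGC-style video", "won": False},
    {"persona": "clean-ingredient consumer", "angle": "ingredient transparency",
     "format": "static image", "won": True},
    {"persona": "clean-ingredient consumer", "angle": "pain point",
     "format": "short-form video", "won": True},
]


def wins_by(dimension, entries):
    """Count winning tests grouped by one dimension of the log."""
    return Counter(e[dimension] for e in entries if e["won"])


print(wins_by("angle", log))
print(wins_by("format", log))
```

Counting wins per dimension is a crude summary, but it turns "why did that ad work?" into a question the log can answer instead of one that depends on someone's memory.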

The Compounding Effect of Creative Intelligence

Here's the thing most brands miss about creative testing: the point isn't just to find the winning ad. The point is to build an unfair advantage in your category.

When you've run 200+ concepts over 12 months, you know things about your customer that your competitors can't buy. You know which emotional entry points unlock demand. You know which objections kill conversion. You know which proof structures earn trust from skeptics. You know which format works at which stage of the funnel.

That knowledge compounds. Every test makes the next test smarter. Every winning concept teaches you something about the next brief. And every persona you crack opens a new pocket of scale that your competitors don't even know exists.

The brands that win on paid social in 2026 aren't winning because they spend more. They're winning because they know more, and they built a system to keep learning.

What This Means For Your Brand Right Now

If you're running Meta and you haven't systematically tested across fundamentally different personas and message angles, the most important question you can ask is: which customers have I never spoken to, and what would it take to get their attention?

The answer is probably sitting in an untested creative concept. And the only way to find it is to build the matrix, launch the tests, and let the data tell you what your intuition can't.

That's what demand generation actually looks like in 2026. Not better targeting. Better creative.

H Street Digital is a performance marketing agency focused on scaling new customer acquisition for DTC outdoor brands. If you want to see how a creative testing program would apply to your specific brand and category, we'd be glad to take a look.